The Conversation: Building a Branching Scenario from Concept to Deploy in One Day

A visual novel-style Storyline module targeting new people managers, designed to prove that empathy-driven soft skills training can be engaging, polished, and instructionally rigorous without a massive budget or timeline.

- Timeline: ~1 Day
- Tool: Storyline 360
- Format: 4 Decision Points
- AI-Assisted: Midjourney + Claude
- Images Generated: ~140

The Problem I Was Solving

This was a deliberate portfolio piece designed to fill a gap. I already had CyberWise (a custom-built, gamified cybersecurity module) in my portfolio, which demonstrated technical depth and complex interactivity. What I needed was a complementary piece that showed:

- Tool fluency with Storyline 360, the industry standard

- Soft skills domain: people management and difficult conversations

- Scenario writing and meaningful consequences that carry the experience on their own

- Tight scope execution: polished work shipped fast

The two pieces together tell a story: I can build from scratch when the project demands it, and I can deliver within standard tooling when it doesn't. Technical range + design fundamentals.

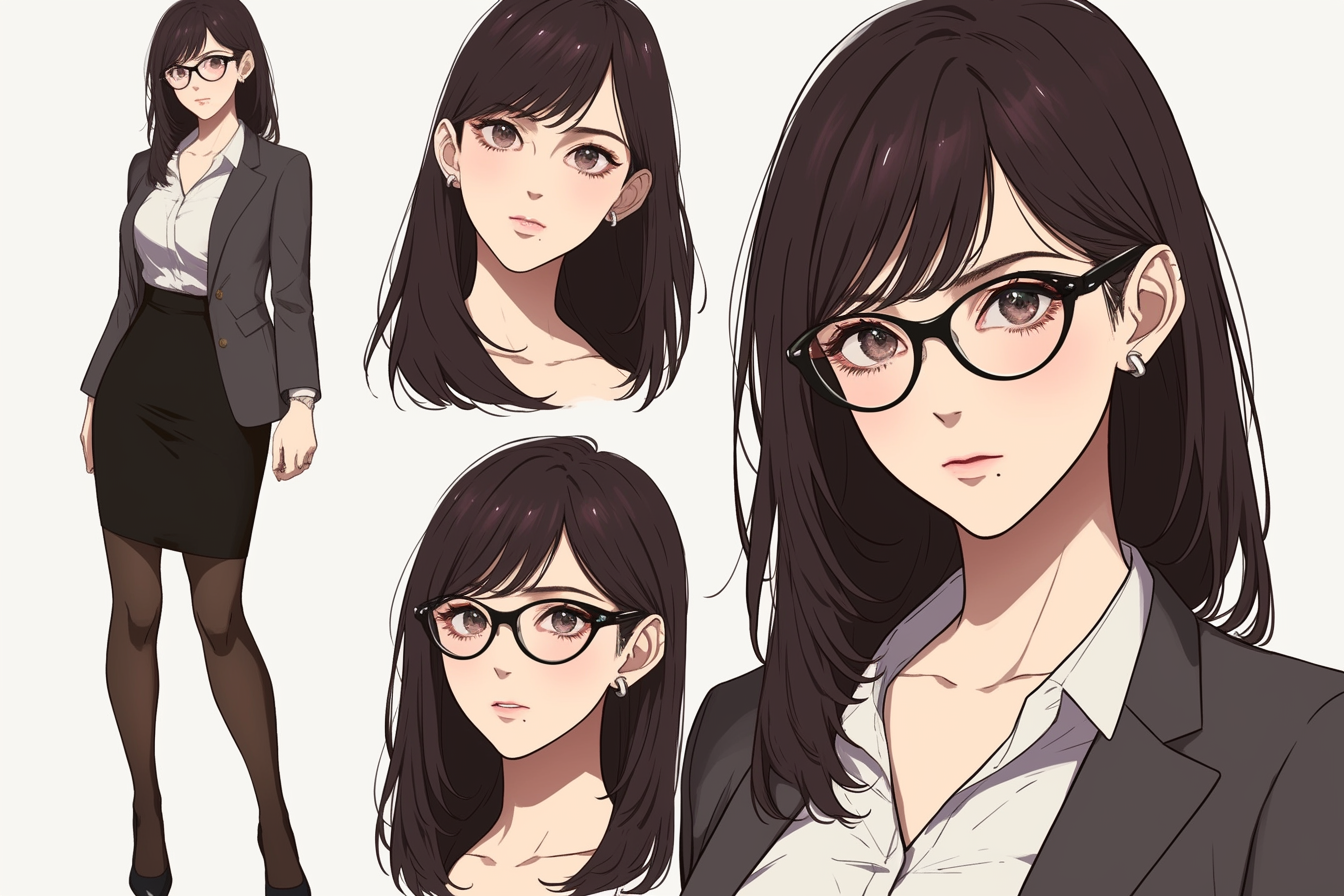

Visual Design & Art Direction

Corporate eLearning has an aesthetic problem. Stock photos of people in blazers pointing at whiteboards don't create emotional engagement, and they actively signal "skip this." I chose a visual novel aesthetic deliberately. It's a format built for dialogue-driven storytelling with character expression states, which maps perfectly onto a branching conversation scenario. It differentiates the piece immediately and signals creative intentionality.

Palette

Typography

Serif for titles, clean sans-serif for body/dialogue

Dimensions

1280 × 720, 16:9

Final Character Design: Expression States

Storyline Technical Build

The module uses Storyline's Modern player style with all navigation stripped except the volume control: no seekbar, no menu. The learner progresses through dialogue and choices only, which reinforces the visual novel feel.

Under the hood, the module runs on a lightweight variable system that tracks what the learner chooses, how Yuki is responding, and what score tier they'll land in at debrief.

Variable Architecture

| Variable | Purpose |
|---|---|
| score | Cumulative across all decision points |
| yuki_state | Tracks character receptiveness |
| dp1_choice–dp4_choice | Stores labels for results |

Trigger Logic

- Best: score +3, yuki_state +1

- Acceptable: score +2, yuki_state unchanged

- Poor: score +1, yuki_state -1
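The trigger logic above can be sketched in plain JavaScript. In the module this lives in Storyline triggers rather than code, so the function name `applyChoice`, the lookup table, and the starting values of 0 are assumptions made for illustration.

```javascript
// Illustrative only: mirrors the three trigger outcomes listed above.
// CHOICE_EFFECTS and applyChoice are hypothetical names, not part of Storyline.
const CHOICE_EFFECTS = {
  best: { score: 3, yukiState: 1 },
  acceptable: { score: 2, yukiState: 0 },
  poor: { score: 1, yukiState: -1 },
};

// Returns a new state object with the choice's effect applied.
function applyChoice(state, quality) {
  const effect = CHOICE_EFFECTS[quality];
  if (!effect) {
    throw new Error(`Unknown choice quality: ${quality}`);
  }
  return {
    score: state.score + effect.score,
    yuki_state: state.yuki_state + effect.yukiState,
  };
}

// Example run through four decision points (assumes both variables start at 0).
const start = { score: 0, yuki_state: 0 };
const afterRun = ["best", "poor", "acceptable", "best"].reduce(
  (state, quality) => applyChoice(state, quality),
  start
);
console.log(afterRun); // { score: 9, yuki_state: 1 }
```

Because even a Poor choice adds a point, the score never drops; only yuki_state can move in both directions.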

Character State System

Each reaction slide has a single Yuki image object with 3 built-in states (Receptive, Neutral, Guarded). On timeline start, conditional triggers read yuki_state and switch to the appropriate state, so the learner sees Yuki's body language shift based on their cumulative choices.
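The state switch can be pictured as a simple mapping from yuki_state to one of the three image states. The thresholds below (positive, zero, negative) are an assumption for this sketch; the conditions in the actual triggers may use different cutoffs.

```javascript
// Hypothetical mapping from the yuki_state number to a Storyline state name.
// The cutoffs (> 0, < 0, otherwise) are assumed, not taken from the module.
function yukiStateName(yukiState) {
  if (yukiState > 0) return "Receptive";
  if (yukiState < 0) return "Guarded";
  return "Neutral";
}

console.log(yukiStateName(1));  // Receptive
console.log(yukiStateName(0));  // Neutral
console.log(yukiStateName(-2)); // Guarded
```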

Debrief System

The score tier display uses a single text box with 3 states set by conditional triggers. The learner's score is shown dynamically through a %score% variable reference, and the narrator text uses a typewriter effect. The debrief closes with the SBI+I framework (Situation, Behavior, Impact, Intent). By this point the learner has already experienced why each element matters through their choices, so the framework lands as a codification of what they just lived through.
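With 4 decision points worth 1 to 3 points each, the final score falls between 4 and 12 if it starts at 0. A rough sketch of the tier selection follows; the tier names and the cutoffs of 7 and 10 are placeholders chosen for illustration, not the module's actual values.

```javascript
// Score bounds follow from 4 decision points at +1 to +3 each (assuming a start of 0).
const DECISION_POINTS = 4;
const MIN_SCORE = DECISION_POINTS * 1; // 4
const MAX_SCORE = DECISION_POINTS * 3; // 12

// Hypothetical tier names and cutoffs; swap in the real ones as needed.
function scoreTier(score) {
  if (score < MIN_SCORE || score > MAX_SCORE) {
    throw new RangeError(`Score ${score} is outside ${MIN_SCORE}-${MAX_SCORE}`);
  }
  if (score >= 10) return "high";
  if (score >= 7) return "middle";
  return "low";
}

console.log(scoreTier(12)); // high
console.log(scoreTier(8));  // middle
console.log(scoreTier(4));  // low
```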

Results Page Integration

A JavaScript trigger on the final slide reads all variables via GetPlayer(), constructs a URL with score and choice data as query parameters, and opens an external results page. That page parses the parameters and renders a choice review panel showing what the learner picked vs. the optimal response at each decision point, all in the same navy/amber design system.
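A minimal sketch of that handoff is below. `GetPlayer()` and its `GetVar()` method come from Storyline's JavaScript API; the helper names, the query parameter names (`score`, `dp1` to `dp4`), and the example.com address are assumptions made for this illustration.

```javascript
// Builds the results URL from a Storyline player object.
// Parameter names and the base URL passed in are hypothetical.
function buildResultsUrl(player, baseUrl) {
  const params = new URLSearchParams();
  params.set("score", String(player.GetVar("score")));
  for (let i = 1; i <= 4; i++) {
    params.set(`dp${i}`, String(player.GetVar(`dp${i}_choice`)));
  }
  return `${baseUrl}?${params.toString()}`;
}

// Runs on the results page: turns the query string back into data.
function parseResults(url) {
  const params = new URL(url).searchParams;
  return {
    score: Number(params.get("score")),
    choices: [1, 2, 3, 4].map((i) => params.get(`dp${i}`)),
  };
}

// Inside a Storyline "Execute JavaScript" trigger, the call would look like:
//   window.open(buildResultsUrl(GetPlayer(), "https://example.com/results.html"), "_blank");
// On the results page:
//   const results = parseResults(window.location.href);
```

Using URLSearchParams on both ends handles the encoding of spaces and punctuation in the choice labels, so the labels survive the round trip unchanged.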

Tools & Credits

| Tool | Use |
|---|---|
| Articulate Storyline 360 | Module authoring, interactions, triggers, states |
| Midjourney (Niji 6) | Character sprites and background art generation |
| Photoshop | Image tweaks and cleanup on Midjourney output |
| Remove.bg | Background removal on character assets |
| Claude (Anthropic) | Build planning, prompt engineering strategy, Storyline troubleshooting, results page code |
| GitHub Pages | Hosting and deployment |

See It in Action

Experience the branching scenario yourself. Make the choices, see the consequences, and explore the debrief.

Play the Module